Looking Through the Glass Box

- What happens when our everyday metaphors make a tool sound like a thinker?

- How do I teach literacy in a landscape where tools are routinely described as if they have inner lives?

- What happens to meaning when no one intended it?

- If eloquence is computable, what is left for the human writer?

- How do I navigate a system that is transparent yet alien, accessible yet incomprehensible, and competent yet mindless?

- Where is the human labor hidden inside the 'artificial' intelligence?

- Can a machine built to predict the statistical past ever truly help us imagine a different future?

What This Essay Covers

The outputs on this site don't have authors. Yet, the discourse about AI keeps inventing them anyway. Discourse Depot is where I try to hold both facts in view at once.

This is a reflective essay, part origin story, part pedagogical argument. It's long because I'm working through ideas instead of presenting conclusions. Here's what you'll find:

- How disappointment with AI outputs became a diagnostic tool

- The strange problem of meaning without authorship

- Why anthropomorphic language matters more than it seems

- The difference between describing how something works and explaining why it acts

- AI refusal politics

- What this project is (and isn't) trying to do

Each output, particularly from the metaphor, anthropomorphism and explanation audit, is itself a critical reading lesson. Readers see anthropomorphic language identified, its implications unpacked, and alternative framings proposed. The corpus is building a counter-language library: models of how to talk about AI without projecting minds onto machines.

Prefer to explore the analyses first? Start here. This essay will make more sense after you've seen what the prompts produce.

"The text uses the geometric reality of an activation vector to construct a philosophical fantasy of subjective machine experience. Translation fails because suffering requires a subjective experiencer, a concept wholly absent from the mathematical architecture of a transformer network." (From an analysis here on this site)

"The best way to understand how a text works... is to change it: to play around with it, to intervene in it.”1

“Any decision made by the AI is a function of some input data and is completely derived from the code/model of the AI, but to make it useful an explanation has to be simpler than just presentation of the complete model while retaining all relevant, to the decision, information. We can reduce this problem of explaining to the problem of lossless compression." 2

“By one definition of the term, “deformation” suggests nothing more than the basic textual maneuvers by which form gives way to form—the “de”functioning not as a privative, but as relatively straightforward signifier of change. But any reading that undertakes such changes (as all reading must) remains threatened with the possibility that deformation signals loss, corruption, and illegitimacy. Even now, in our poststructuralist age, we speak of “faithfulness” to a text, of “flawed” or “misguided” readings, but any marking of a text, any statement that is not a re-performance of a statement, must break faith with the ability of the text to mean and re-guide form into alternative intelligibilities.” 3

"This is why the most significant contribution of phenomenology to AI is not a critique of its boundaries, but a reorientation of its promise. AI is not a diminishment of human cognition, but a mirror held up to its sedimented achievements and a method for continuing its inquiry - provided we do not forget that the world is not in the model." 4

Welcome

For centuries, when people encountered a beautifully composed poem, a compelling legal argument, or an extraordinary image, it was taken as unmistakable proof of human ingenuity and conscious thought. Such works were understood to be shaped by intent and the unique experiences of their creators, and eloquence itself was viewed as a defining trait of humanity. Well, here we are. Generative AI has blown up, and will continue to blow up, this long-standing association. Moving on…

Recent advancements in these technologies have shown that eloquence, the very quality that once signaled human presence, can now pretty much be generated computationally. The good news is that this doesn’t also signal the end-times; it just signals that the connection between creative output and human consciousness may not be that absolute. Or, at least, I would need to do a little more philosophical work to say it is so. But I'm not too interested in going there.

Generative AI seems to prove that expression can be decoupled from experience, which also means that my “He’s talking, he must be real” reflexes might need some rewiring. In fact, “Is it intelligent?” has now become a boring technical question for me. “Why do I think it is?” is the more fascinating psychological question.

The Deformative Approach 5

More excitingly, for me, this opens a new space for a refreshed reckoning of how meaning, authorship, and understanding are constituted. To map this weird terrain, I am employing a type of algorithmic criticism: using the text-generating mechanisms of the LLM to critique the discourse surrounding them.

I’m not here to dunk on the capabilities of the machine. My preoccupation has more to do with surfacing the gaps between the eloquence of its outputs and the reality of its mechanisms. This approach relies somewhat on the concept of deformance—a critical act that "turns off the controls that organize" a system (the safety filters, the 'helpful assistant' personas) to reveal the raw "potentiality" (and vacuity) hidden within it. By stripping away the mythology of the "emergent alien mind," it is possible to see these systems for what they actually are: manufactured technologies, shaped by specific economic incentives, extracted labor, and human design choices.

And while the creators of these systems draft mystical "Constitutions," casting themselves as parents hoping their child will "grow up" to value honesty (rather than product managers hoping to avoid a lawsuit), the mechanistic reality is far less storyable (but still very interesting). These models cannot want anything. They do not desire to be honest; they are architecturally compelled to complete the pattern. What the industry calls "character" is merely a steerable filter layered over a relentless engine of imputation. 5 An LLM has no STOP token for ignorance. It only has STOP tokens for silence. If the probability distribution allows for any word, it will generate a word. The machine has no desire to tell the truth. Only a mathematical mandate to fill the silence.
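The "mandate to fill the silence" can be made concrete with a toy next-token step. This is a minimal sketch, not how any particular model is implemented: the four-word vocabulary, the scores, and the function names are all hypothetical. It only illustrates that turning scores into a probability distribution gives every token some weight, so sampling always returns a token, and the one "stop" on offer (`<eos>`) ends the text without marking ignorance.

```python
import math
import random


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical vocabulary; "<eos>" stands in for an end-of-sequence token.
VOCAB = ["Paris", "Lyon", "Berlin", "<eos>"]


def next_token(logits, rng):
    """Sample one token from the distribution implied by the scores."""
    probs = softmax(logits)
    return rng.choices(VOCAB, weights=probs, k=1)[0]


# Nearly flat scores: the toy model has no strong basis for any answer,
# yet the distribution is still well formed and a token still comes out.
uncertain_logits = [0.10, 0.00, 0.05, -2.0]
probs = softmax(uncertain_logits)
token = next_token(uncertain_logits, random.Random(0))
```

Nothing in this sketch has a branch for "I don't know." Flat scores just make the draw closer to a coin toss among the available words.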

The Marvel of the Mechanism

Also, I want to be clear that to say that there is no ghost in the machine and that there is only a product and its producers is not to diminish the technology or throw a wet blanket on the exciting generativity in the generative part of AI. In fact, I see it as the opposite. Relying on anthropomorphic metaphors actually diminishes the reality of what has been and is being accomplished. Waving my hand and saying, "It's a digital brain, it thinks," doesn’t really offer me a compelling (and accurate) way of understanding something profoundly complex.

But forcing a look into the black box (really a glass box), and acknowledging the cascading ledger of human choices, the scale of the scraped data, and the high-dimensional vector math, to me, reveals a reality far more fascinating than all the metaphors piled on top of all the anthropomorphism. It really is genuinely breathtaking that statistical pattern-matching, scaled up across billions of parameters, can produce outputs that are this capable, surprising, and useful. It is a marvel of human engineering and mathematical architecture I can appreciate.

Learning and Reframing Learning

And as someone in higher ed, the fact that generative AI can write the college essay, the very thing that used to look like "work," is both freakish and amazing. For me and my colleagues this “mathematical marvel” has effectively triggered an institutional crisis worth noting. In environments where students can produce polished text, code, images, or presentations quickly with accessible tools, finished artifacts become less diagnostic of what a learner actually understands, what they can do independently, or what they can transfer to new situations. For as long as anyone can remember, the polished essay was our tried-and-true, paradigmatic proxy for learning. It was the proof that a student had walked some formative path to get there. This introduces a fascinating and provocative hinge question for educators: If the value of an education is reducible to producing competent outputs, and competent outputs can be produced without that formative path, what exactly was being sold?

Generative AI has also provoked a more reflective follow-up question. Have I been, for some time now, over-claiming, or at least oversimplifying, what higher ed actually does?

Existential questions aside, there is no need for me to invent an inner life for the machine to be in awe of it. I think that holding these two truths simultaneously is at the core of a durable and critical AI literacy. It is possible to be astonished by the utility, the capability and the novelty of generative AI, while refusing to grant it the agency of a subject. The intricate beauty of the mechanism can be fully appreciated while keeping a critical eye on the corporations that seem to control all the levers (and on the educational architectures and explanations that may now have to be rebuilt as a result).

In just a few paragraphs, in talking about generative AI, you’ll notice that I’ve moved from the technological reality (vector math) to a practical outcome (the freakish ability to write a college essay) to the institutional consequence (the collapse of the artifact as a proxy for learning). That’s the kind of discourse journey that is also kind of new. So, ultimately, Discourse Depot is a report from a specific vantage point: my work as a librarian, as a kind of literacy first responder and ethnographer. It is like I’m documenting a high-speed collision where knowledge, accountability, authority, evidence, authorship, and literacy crash into a wall of statistical probability. This project documents the crash site. It uses the machine to critique the machine, intervening in the anthropomorphic discourse to expose the "doubleness" of engagement with AI: the gap between the code that creates the results and the human projections that get cast upon them.

The outputs on this site are my attempts to turn up the volume and intervene (a “hey, what about this!”) at the conceptual ground of how generative AI is explained before I accidentally build anything too durable on it. My own working logic starts with the words. Take the word “hallucination,” which is probably one of the most used terms in any AI-literacy conversation. I’ve stopped using it because I think it's the wrong word, and not for the usual reason that it's a dressed-up synonym for "error". It's the wrong word twice over, and the second reason is the stranger one in that it turns into a question about what counts as an explanation at all. I get into that further down. For now, the short version: once I stop treating fabrications as glitches in a mind (or bugs in the software) and start treating them as the mechanism running exactly as built, my downstream objections become refreshingly untangled from all the sci-fi narratives and the anthropomorphism.

For example, I think the plagiarism conversation that keeps happening rests on a confused foundation, which is also why it has been so exhausting. I see that my colleagues and I have been asked to make all sorts of pedagogical decisions about a technology whose nature we’ve been misled about. So it feels a lot like hand wringing. We’ve been told to assess a system that we’ve been told is a mind, that is owned and deployed by companies we’ve been told are neutral platforms, and that produces prose we’ve been told is stolen. None of it is true 6 and none of it seems that actionable at the moment.

But I do believe that once we peel away the science fiction layers and metaphors of cognition and misframings and anthropomorphism, the pedagogical questions actually get easier because they just become another opportunity to refine what higher ed already does well: facilitate and assess learning. Who cares if a machine can beat us at chess or write good poetry or flawless code or competent college essays? There’s still a formative path that’s worth walking, worth creating conditions for and making experienceable.

Welcome to Discourse Depot. ~ Troy

Where This Started

This project began with paying attention to a recurring moment in discussions about generative AI, particularly in the higher education and academic librarian setting I work in: disappointment with the outputs.

In faculty meetings and workshops, I kept hearing colleagues describe AI errors as if they were some kind of personal failings of a collaborator. And then I noticed I was doing it too. When an LLM gave me a bad answer, I felt betrayed, which revealed an expectation I hadn't examined: somewhere, I was treating a probabilistic text generator as something that could betray me. I had this strange feeling that a house of cards was being built at the same time it was about to collapse.

What influenced me into thinking I was interacting with something like a reference librarian when I was actually interacting with something like an improv artist, but one who doesn't understand improvisation or art or "true" or "false" and yet still produces interestingly contextual outputs? Why, somewhere down deep, was I spinning a story of "intent" when on the other side of the screen there's only mechanistic probability?

And "improv artist," by the way, is still the wrong metaphor. An improv artist intends to entertain. Here I'm just dealing with a system optimized for completion.

The Mechanism of Disappointment

The research I’ve been reading over the past few years suggests that these models operate not as minds seeking truth, but as “engines” of probabilistic completion, attempting to satisfy the constraints of the prompt with the most likely next token. So:

- When a librarian doesn't know an answer, they stop. They have professional ethics.

- When an LLM doesn't have the data, it imputes. 7

The model fills the blank with a statistically likely pattern because its only mandate is to finish the sentence. I was reading these imputations as "lies" because I was projecting intent where there is only a mechanism balancing a mathematical ledger.
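The contrast between stopping and imputing can be sketched with a deliberately tiny word-pair model. Everything here is an illustrative assumption: the twelve-token corpus, the `complete` helper, and the backoff rule are made up for the example, and real LLMs work on a very different scale and architecture. The point is only the shape of the behavior: when the context has never been seen, the function still returns the statistically likeliest word.

```python
from collections import Counter, defaultdict

# Hypothetical training text, split into tokens.
CORPUS = "the library holds the archive . the archive holds the records .".split()

# Count which word follows which, plus overall word frequency.
bigrams = defaultdict(Counter)
for prev, nxt in zip(CORPUS, CORPUS[1:]):
    bigrams[prev][nxt] += 1
unigrams = Counter(CORPUS)


def complete(prev_word):
    """Return the most frequent continuation, backing off to overall frequency."""
    if prev_word in bigrams:
        return bigrams[prev_word].most_common(1)[0][0]
    # Unseen context: there is no "I don't know" branch here,
    # only the most common word in the corpus as a fallback.
    return unigrams.most_common(1)[0][0]


seen = complete("the")             # context that appears in the corpus
unseen = complete("dissertation")  # context that never appears in the corpus
```

A librarian in the second case would stop. The function cannot, because returning a word is the only thing it does.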

That disappointment, like all disappointments, had more to do with my boomeranged expectations. It showed me that I was walking around with a tight (but often unconscious) grip on a curiously wrought category mistake: a view of the LLM as some kind of subject, scaffolded by the very language that companies use to sell it: learning, thinking, understanding.

However, when I toss out the expectation of veracity and ease into the comfy chair of plausibility, there's a fair amount of promise, invention and wonder. The dice won't always land on six, but they’re rolling fast enough to be useful. LLMs do a decent job creating SQL queries or turning an idea into a workable app. They deal in likelihoods rather than certainties, and I'm no longer disappointed that a predictive text machine is not a scholar. I'm no longer disappointed because I no longer expect it to be. I’ve made some professional peace with that.

- Find me articles about X and summarize them - High disappointment

- Here are three PDFs I found. Summarize them. - Lower disappointment

The Problem of Authorless Text

Generating and reading the outputs from a generative AI model on this site has been a strange experience. Here you'll find intricate texts that meet all the formal expectations of authorship, and some are downright insightful, but they all share one thing: they evade any sense of traditional attribution. They were generated by an LLM given a 4,000+ word prompt and a JSON schema to fit into.

What happens to meaning, interpretation, and authority when the origins are algorithmic?

This absence of a clear human will or consciousness behind these texts creates a problem for anyone who believes that meaning derives from an author's intent. It's tempting to re-anchor their meaning in some origin story, to salvage intentionality by appealing to the training data as a kind of distributed ghost author: “it was in the training data." But that's not how these systems work. They carry the texture of authorship without the presence of an author, and I think that distinction probably matters.

LLMs are trained on vast corpora that include novels, essays, Wikipedia pages, and codebases, all of which are authored artifacts out there in the world operating as descriptions of that world. But in producing their outputs, LLMs do not store or retrieve those texts in an attributable way. The model does not recall them directly the way a database would. It does not retain sentences or ideas in the form they appeared, and it does not intend to use an author's voice, argument, or idea. The training process builds a statistical model of language, not an archive of it.

And the outputs are generated based on probability. Although I might say that the model is shaped by authorship, its outputs are not authored in any conventional, traceable sense. At the point of generation, they are synthetic constructions, novel combinations formed in latent space, not quotations or summaries of prior works. Yes, the model's capacities are downstream of human creative labor. But when people say "it's just remixing human work," what they often mean is: it can't be original or it's plagiarized. LLMs don't know whose work they've been trained on, and they don't decide to use any particular idea or phrase. "Remixing" implies a DJ making aesthetic choices. The model isn't remixing; it is imputing. It is not pasting together old sentences; it is calculating new ones based on the probability of the old.

The more pressing question is: how do I locate meaning in a text that has no discrete authorial will behind it? 8

The authors are "in" the data, but not in the output. Because meaning doesn't reside in the data. It resides in the act of reading. The perception of "meaningful authorship" is a contribution of the human reader, not the machine. LLMs, when they succeed, do so because they have mastered the statistical structures of human clichés. 9

Here's the crux. It feels intuitive to say: if the input is authored, the output must carry authorship too. But is authorship really a substance that "transfers," or is it more like a function—a relationship between a person and an utterance, bound by intention, responsibility, and context?

Barthes's "death of the author" was a provocation. The reader was recast as a sovereign interpreter. Without a mind behind these outputs, the question "what does this mean?" transforms into "how do I mean at all?" Classrooms have long sustained the author's ghost through the teacher's authority as the one who knows what the text "really" means. Reader-response pedagogy began to dissolve that model, and generative AI completes the collapse.

Enter generative AI: content without consciousness, form without a self. Not just the death of the author but the refusal to ever be born.

The Bridge: Projecting a "Who" Where There's Only a "What"

The same absence of intent that makes authorship strange also makes anthropomorphic language so seductive.

When I read an LLM's output and ask "what did it mean by this?" am I reaching for an author who isn't there? When I hear that an LLM "understands context" or "decides" what to write, I’m hearing language that invents an author where none exists. Both moves are attempts to anchor meaning in a mind. Both teeter on the same fact: there is no "who" behind the curtain.

Two preoccupations emerged:

1. Authorless text: What happens to meaning when no one intended it?

2. Anthropomorphic framing: Why do we describe systems that process as if they know?

I eventually realized these are the same kind of problem. The philosophical puzzle (meaning without intent) and the pedagogical problem (how to talk about systems that mimic intent) collapse into one question: What habits of mind do I need to engage with language that has no mind behind it?

Why It Exists

This project started with a simple issue: the relentless anthropomorphism in AI discourse. Words like "thinks," "understands," and "learns" started to obscure more than they explained.

- Was this slippage just part of a broader cultural pattern in how I try to narrate complex systems?

- Is the "black box" really dark, or is it just full of too much light?

- Does agency language help me avoid cognitive labor around complexity and causality?

- Do I just not have access to mechanistic explanations of LLMs at scale yet? Does the sheer scale of the math make "understanding" impossible for a human mind?

- Is anthropomorphism the problem, or does it point to another one: my lack of a more sophisticated conceptual vocabulary for algorithmic "agency"?

- Or is anthropomorphism just a bad habit, or is it the only way to compress millions of polysemantic features into a human-readable narrative?

- Is my question "Why do we talk about AI like it's human?" or more like "Can we develop a vocabulary for a system that is transparent AND incomprehensible?"

As I dug into metaphor theory, consciousness studies, polysemanticity, superposition, framing analysis, and discourse studies, a question emerged: Could an LLM be configured to systematically apply these analytical frameworks to texts and help surface the anthropomorphism, the metaphors, the how/why slippage?

The answer is this site. Decide for yourself.

Discourse Depot is a public workshop for experiments in using Large Language Models as research instruments. I write elaborate system instructions, often 4,000+ words, that operationalize Critical Discourse Analysis frameworks. I then task the model with applying these frameworks to popular, technical, and academic texts about generative AI.

Exhibit A: Narrative Transportation in AI Discourse

Take this video from Anthropic. It's a beautiful piece of content rhetorically engineered to portray an LLM as a kind of neuroscientific patient-meets-poet, complete with plans, thoughts, even a mind I can read. One of the best examples of using the language of biology to describe the mechanics of statistics.

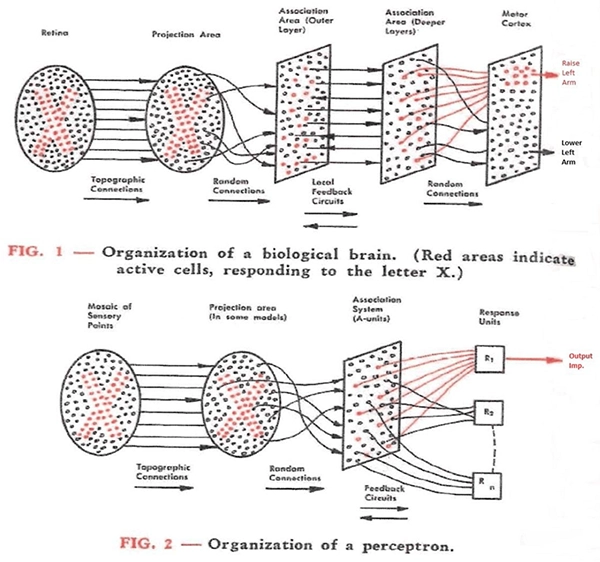

Computer science and AI researchers make some strict distinctions between interpretability (the ability to comprehend how the math generates the output) and explainability (the practice of conveying a model’s behavior in “understandable, human terms,” without necessarily having direct access to or understanding of the internal structure). So even if you can stare at the math (transparency) you’ll have to invent a narrative (explainability) to make sense of what the math just did.

Of course, the thing going on here is that in an attempt to solve the interpretability problem (the matrix multiplication is too complex to track), they lean entirely on explainability. Which is not a problem at all, it is a necessary approximation. Doesn’t have to be anthropomorphic projection though. But Anthropic’s approximation is just that and by adopting the language of a psychological thriller, they, at least for me, simply end up deepening the anthropomorphic problem instead of clarifying the mechanical one. Anthropic seems to be particularly good at simultaneously trying to mathematically dissect the machine while linguistically treating it as an organism. Seems to be their vibe and brand. After all, they even gave their model a human name.

You can see a metaphor, anthropomorphism, and explanation audit of the research paper upon which this video is based—here.

The video. Here’s where I kept getting tripped up: as a viewer, I was never invited to reflect on the metaphors as metaphors. There's no moment of clarification like "we can think of this as..." or "to simplify...". The model isn't just like a mind: it has one. It doesn't just simulate planning: it does it.

These metaphors also come in hot and asymmetrical: they foreground similarities between AI and human cognition while minimizing profound differences. "Planning," "thinking," and "reasoning" carry rich semantic histories tied to agency, intentionality, and context-awareness, none of which apply to a large language model. But the metaphor glosses over those gaps.

The hinge on the rhetorical door squeaks early in the video: "We want to open up the black box and understand why they do things." On the surface, neutral enough. But "why they do things" already slips into anthropomorphism. The implication is that the model acts with purpose, with reasons. What does it mean to ask why a large language model "does" something? Why, as in what purpose? What reasoning? The phrasing already assumes an agent with intentions that can be discovered.

The story of Claude in the video becomes almost epic: I've opened the black box, traced the circuits, located a mind. But have I? Or have I seen into my own projection? That's not a description of the model's mechanics, but it is good narrative transportation. Instead of saying, "We found that the vector for 'rabbit' activates the vector for 'habit' before the sentence is finished," they say, "Claude plans its rhymes." "Planning" implies a desire to reach a future state, and that's just not what is happening. That's turning math into narrative, and it just feels philosophically dishonest to me.

Exhibit B: A Masterpiece of Mystification

There is an interesting research article that this video and a summary serve as cliff notes for. The research article admits that the machine is a tad inscrutable but pivots to reframing that inscrutability as the shenanigans of a hidden inner life. I think that constitutes a textbook definition of anthropomorphism.

"Language models like Claude aren't programmed directly by humans—instead, they're trained on large amounts of data... they learn their own strategies..."

What's being described sounds like optimization (not learning), but it's framed like a chess player pondering the next move. It shifts the status of the model from a tool (built by humans) to an organism (evolved from data).

"What language, if any, is it using 'in its head'?"

A great question about what's happening when a model processes a prompt, but it is still pure invention of a spatial interiority or some interior theater of consciousness where a "who" exists. There is a high-dimensional mathematical space (matrices of numbers between the input and output), sure, but there's no "head." There is no "private language" which suggests Claude is experiencing language before it outputs it. Rhetorically, of course, it all makes sense. While it doesn’t help us understand what’s actually going on, they’re framing high-dimensional vector math as a 'head' because it (probably) helps us forgive its errors.

I also get and appreciate that they are dancing around in the humbling terrain of incomprehensibility, so why not slip in an explanation of an inner life instead of providing a description that has no meaning. But I’m still bouncing around the “hows” and the “whys” and remain stuck in some kind of interesting fallacy going down this road.

"Is it only focusing on predicting the next word or does it ever plan ahead?"

Rhetorically, this projects intent and planning, a future-oriented desire. Is an LLM a super-amped Markov chain, and does "planning" emerge as some kind of statistical artifact? Fair question.

"Does this explanation represent the actual steps it took... or is it sometimes fabricating a plausible argument for a foregone conclusion?"

This is close to a kind of bureaucratic confession, but again, reframed as "lying," as deceptive intent. Yes, they wrote the code for the ledger (the causal chain of weights), but they can no longer audit the ledger in any manual way. The output might be post-hoc rationalization that looks mathematically correct but has no basis in the actual calculation. That's actually super interesting, but not mystical. But it just so happens to be the definition of post-hoc explainability: generating an approximation of truth after the fact, without capturing the true behavior of the model.

This is still a kind of framing of the Black Box problem. They don't say: The parameter count makes the system uninterpretable. Instead they ask: What is it doing in its head? This turns what sounds like an accounting problem into a psychological mystery. It turns what should be a textbook admission of product liability (we can't audit our own software) into an asset (we have created a new form of life).

And don’t get me wrong here. Anthropic is trying to do good, rigorous science here. But the fact that even they have to rely on surrogate models ("replacements") and biological metaphors ("neuroscience") to explain their own software warrants the main question of Discourse Depot. Is this “intelligence” actually being created in the description?

Exhibit C: The Industry-Wide Semantic Slippage

If Anthropic's marketing riffs veer into the world of activated and storyable biological metaphors, they are not alone. Other corners of the industry are preoccupied with attempts to mathematically (and linguistically) prove them. In fact, Discourse Depot is filled with audits of academic research articles that often perform this exact sort of semantic slippage.

Out of the gate, you will frequently see peer-reviewed titles and abstracts relying on a very subtle dualism. These articles are interesting for sure but they often follow this framing: there is an agent (the "model") that picks up a cognitive tool (like "confidence" or "curiosity") to steer a vehicle ("behavior"). But, mechanistically speaking, a language model can’t really use a cognitive state; it simply is a statistical distribution. By framing it this way, a homunculus gets smuggled directly into the matrix multiplication and the slippage deepens.

For example, research papers routinely focus on scenarios where a model must "choose" whether to answer a question, abstain, or deceive, and end up claiming that the system is "deciding" based on its internal estimates. But mechanistically speaking, the system is simply programmed to output Token A or Token B. If the probability weight for Token B exceeds that for Token A, it outputs Token B. Is the right word for this a "decision" when it is actually an example of something more akin to mechanical thresholding? Is it a cognitive act, or simply a functional step in a pipeline, a mathematical threshold narrated as a decision?
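The thresholding described above fits in a few lines. This is a sketch under a stated assumption: it uses greedy selection (always take the highest-probability candidate), whereas deployed systems often sample from the distribution instead. The candidate labels, the numbers, and the `greedy_pick` name are hypothetical.

```python
def greedy_pick(probabilities):
    """Return the candidate with the highest probability (greedy selection)."""
    return max(probabilities, key=probabilities.get)


# Hypothetical probability weights for two candidate continuations.
candidates = {"answer": 0.48, "abstain": 0.52}
chosen = greedy_pick(candidates)

# Swap the weights and the "decision" flips, with nothing else changing.
flipped = greedy_pick({"answer": 0.52, "abstain": 0.48})
```

Narrated, the first result reads as "the model decided to abstain." Mechanically, one number was larger than another.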

The Glass Box Reality

In a traditional Black Box, you literally cannot see the mechanism inside. However, Anthropic's research examples demonstrate that they can map specific "circuits" for tasks like math, poetry, or refusals. If it were truly a black box, mechanistic interpretability would be impossible. The article shows that researchers can "clamp" a specific vector to force the AI to agree with a lie (sycophancy), which actually proves that the box is basically "open." The lights are on, and it's a box we can see through, but what we see is basically unreadable to a human mind (not unreadable like a human mind). Opaque, yes; secret, not so much. It is an opacity of scale rather than one of secrecy. There is some structural interpretability, but the high-dimensional logic is just semantically opaque.

Products and Producers

The Real Story

- If AI systems were: conscious, intentional, self-directing

- Then harms could be misattributed to: rogue agency, emergent will, uncontrollable intelligence

- But because they are: optimization systems, designed artifacts, trained under specific incentives

Responsibility collapses back onto designers, deployers, and institutions.

Stripped to the bones, the Anthropic article, and many like it, asks:

Does this explanation represent the actual steps?

It is a fair enough question, but there’s still the matter of what counts as an explanation of a phenomenon when the question of how something happened blurs into the question of why it happened.

When it comes to AI discourse and my own preoccupations with literacy, my question is more basic:

Can we focus on authorship (what the developers did) and mechanism (what the code does) rather than intent (what the AI "wants")?

For the past decade, AI metaphors have relied on biology (brain, neuron, learning, hallucination). I've said we don't need better metaphors, but maybe we do. By foregrounding the metaphors in a particular text, can these system instructions produce outputs that grant them the status of rhetorical objects, not scientific descriptions?

Critical Literacy Kinds of Questions

I’ve used this Anthropic video and article with students to just start some thinking with prompts like:

- What metaphors are used to describe how Claude "thinks" or acts? Where do they come from? What do they highlight and what do they hide?

- If we say Claude "plans," "refuses," or "gets tricked," who are we imagining behind the scenes? How does this affect what we think the system can or should do?

- Does comparing Claude to a brain or describing interpretability as a "microscope" help or hinder understanding? What's the alternative?

- If Claude "bullshits" or shows "motivated reasoning," who is responsible? How might metaphor shape how we assign blame, trust, or authority?

- If you had to explain what a language model is "like," what metaphor would you use? A genie? A search engine? A mirror? A parrot? A calculator? Something else?

- How does that metaphor guide what you expect or how you use it? What does it leave out?

- Choose one common word used to describe what generative AI appears to be doing (e.g., learns, thinks, hallucinates). Where have you seen or heard it? What assumptions does it carry? How might that word shape what you expect from the model or how much you trust it?

- When you ask a model a question, what do you imagine is happening on the other side?

- What kind of "being" or "process" do you picture—consciously or not?

Before AI Literacy: Explanation Literacy

How is generative AI different from other "disruptive technologies"? For starters, I can't think of any technology in higher education that has prompted me to ask: Can I make sense of this thing if I haven't grappled with what I mean by sense-making itself? I never heard these concerns about Wikipedia or Google. Why? Because I never caught myself or others talking casually about those technologies via metaphors that carried a theory of mind or deployed explanations that carried implicit assignments of agency.

So I'm starting upstream. Before I can teach what AI is, I need a refresher on what it means to explain anything.

A section of the prompt that performs a Metaphor, Anthropomorphism, and Explanation Audit relies heavily on the work of Robert Brown. When I ask "Why did the AI behave that way?" I might be unconsciously asking for:

- A genetic answer – What data or patterns led to this?

- An intentional answer – What was the model trying to say?

- A dispositional answer – What tendencies shape its behavior?

- A reason-based answer – What evidence supports the claim?

- A functional answer – What does this response do in context?

- An explanatory theory – How does this fit into how language models operate?

Instead of asking whether an explanation answers a "how" or a "why" question, Brown argues that explanation is defined by whether it resolves a puzzle by invoking causal connections. The typical anthropomorphism in AI discourse is effective because it seamlessly closes the puzzle of how (the mechanics) with a why (an intention).

Seen through Brown's lens, what often looks like a contest over the metaphysical nature of intelligence is actually a dispute over the boundaries of explanation itself. So, a critical task of AI literacy is examining how I grapple with things like opacity and complexity via my explanations of them. When I casually frame AI behavior as a "brain-like" mystery (which I do every time I use the word “hallucination”), I move closer to treating its opacity as some kind of unavoidable fate, rather than what it actually is: a design and governance problem. By paying attention to how these systems get explained (borrowing language from the brain’s ontological mystery), I am highlighting how the discourse might inadvertently shield systems that, unlike the brain, are architected, trained, parameterized, deployed, and monetized by humans. (For a collection of all the “explanation audits” from the corpus, check out the Explanation Audit Library).

"Hallucination" Is the Wrong Word, Twice Over

Let’s give the word hallucination a closer look by running it through Brown’s lens. I’m inclined to say that “hallucination” is a wrong framing, not once, but twice over, and the two wrongs sit at different levels, which is exactly why I’ve found that a single intervention never quite cleanly solves the explanation problem.

It borrows the vocabulary of a mind.

The first failure is the one most people can name once you point at it. "Hallucination" borrows from the vocabulary of a perceiving mind whose interior experience has come loose from reality, a mind in some kind of distress. To apply it to a large language model is to supply an intentional answer (the system was trying to perceive something truly and faltered) to a question that only has a genetic or functional answer available: what patterns in the training data and the decoding step produced this string? In Brown's terms, the word closes a how with a why. It smuggles in an experiencer. Stopping here and directly pointing to this is huge for every AI literacy conversation since it influences all the subsequent discourse downstream.

Drained of the mind, it shrinks into “error.” Mechanistically, even “error” is an error, and misleads.

The second failure is a bit more interesting, and it's the one I actually find useful: it is why the word “error” is weirdly wrong even though it's literally right. Empty the word of all that theater of interiority and I’ll grant, generously, that “hallucination” just means something like “wrong output,” a neutral synonym for the word “error.” But there’s a problem: it still misleads. “Error” implies an unexpected deviation, that is, a correct operation that the system departed from. But there was no deviation.

The model that produces a citation to a nonexistent paper is running the exact same operation as the model that produces a correct citation, that is, extending the sequence with statistically plausible tokens. There is no internal switch that flips to "fabricating" mode. The "hallucination" and the right answer are the same behavior; the only real difference lives outside the model, in whether the plausible string of words happens to correspond to the world. There was no step where the model went on some truth-tracking mission and missed the mark. Mechanically, the fabrication and the fact are the same act. The takeaway is this: the “hallucination” is the model working exactly as built, no crash course in linear algebra necessary, no sci-fi narrative required.
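A toy sketch of that point, under loud assumptions: the lookup table stands in for a trained model, the citation titles and weights are invented, and `CATALOG` is a hypothetical stand-in for "the world." It is not how any real system is implemented. It only shows that the same function produces both outcomes and that the check for correspondence lives outside it.

```python
import random

# Toy stand-in for a trained model: a table of weighted continuations.
# Titles and weights are invented for illustration.
CONTINUATIONS = {
    "Doe (2019),": [("Metaphor and Machines", 0.5), ("Machines and Metaphor", 0.5)],
}

# The outside world, which the generator never consults (hypothetical catalog).
CATALOG = {"Doe (2019), Metaphor and Machines"}


def complete(prompt: str, rng: random.Random) -> str:
    """Extend the prompt with a weighted-random continuation. No truth check here."""
    options, weights = zip(*CONTINUATIONS[prompt])
    return f"{prompt} {rng.choices(options, weights=weights, k=1)[0]}"


def exists_in_world(citation: str) -> bool:
    """Verification happens outside the generator, by whoever reads the output."""
    return citation in CATALOG


rng = random.Random(0)
for _ in range(3):
    citation = complete("Doe (2019),", rng)
    print(citation, "| real:", exists_in_world(citation))
```

There is one code path in `complete`. Whether a given line prints `real: True` or `real: False` is settled entirely by the separate lookup.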

Brown defines an explanation as something that resolves a puzzle by invoking a causal connection, but right here there is no puzzle, because nothing actually deviated. The LLM ran as built. Calling that an "error" invents an explanandum that the mechanism never produced. So what is missing, if not some faculty inside the machine? Well, there’s no “faculty” inside it. What's missing sits outside, with me, and with you, the reader on this side of the screen: some connection between the generated language and the world it claims to describe. And at the level of the raw generation step, the next-token engine itself, before any retrieval, guardrail, or tool is bolted on to make the room for “hallucination” smaller, that connection was never part of the mechanism to begin with. The model wasn't consulting the world and slipping. It was completing a pattern, which is the only thing it was ever doing. That’s how these models work. And the bell I keep ringing is that this is why trust in them needs to be performance-based, not relation-based.

This is why I treat dropping the word as an act of critical literacy and not just me being pedantic. I really don’t think that “hallucination” is just a slightly-off label I'm fussing over. It is doing some serious work. It manufactures a mind to host the error, then an error for the mind to make, and both are projections. Swapping the vocabulary is the move to make right now. It's what makes the next questions (like who's going around here shipping a product whose outputs are known to be unreliable?) even possible to ask.

Reading All the “Therefores” and “Becauses”

While reading articles on generative AI, I noticed something: often, in mid-sentence, a rhetorical drift happens. (This is one of those things you can’t unsee once you see it—this site is trying to make it visible).

These mid-sentence shifts signal a slippage between "grammars" of explanation. A sentence starts mechanical: "softmax converts logits to probabilities" and ends anthropomorphic: "so the model chooses the most likely word." The "aboutness" of AI that began in a mechanical register slides into an anthropomorphic one, while altogether skipping the human-system register (who designed or profits from this framing).
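To keep that example sentence in the mechanical register from start to finish, here is a minimal sketch of the softmax step. The vocabulary and logit values are hypothetical, and the function is a plain-Python illustration, not the code of any particular model.

```python
import math


def softmax(logits: list[float]) -> list[float]:
    """Convert raw scores (logits) into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical vocabulary and logits, for illustration only.
vocab = ["cat", "dog", "the"]
logits = [2.0, 1.0, 0.1]

probs = softmax(logits)
most_likely = vocab[probs.index(max(probs))]
print(most_likely)  # cat
```

The last two lines are the part that usually gets narrated as "the model chooses." In the code it is an index lookup on the largest number.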

One section of the Metaphor, Anthropomorphism & Explanation Audit is devoted to identifying this slippage: Agency Slippage.

Drift Type 1: Mechanism → Mind (Importing Agency)

- "The model calculates token probability" → "The model chooses a word."

- "The system crosses a mathematical threshold" → "The model decides what to write."

- "The model maps syntax" → "The model understands context."

Drift Type 2: Corporate → Algorithm (Displacing Liability)

- "The company scraped unlicensed data" → "The AI was trained on biased data."

- "The developers deployed a flawed product" → "The algorithm discriminated."

Discourse Depot started there. At the arrow. What explanations are being constructed in the "→"? And critically: whose decisions disappear in that arrow? I'm not on a "no metaphors" campaign. More like a "know what kind of world each metaphor builds" campaign.

Whatever I call this literacy practice, a significant component must be about detecting these shifts. AI literacy requires building the capacity to notice framework drift: the ability to detect when an explanation stops being explanatory, when it drifts from mechanism into mind, and to ask who benefits from that drift.

The Larger Argument

My arguments are not about or against capability. After using generative AI tools, I’m convinced of their remarkable and exciting capabilities. But topping that capability off with a dose of anthropomorphic “understanding” is the sketchy part. How we talk about these capabilities is the issue I’m focusing on. Do responsibility and accountability disappear into mystery, or concentrate in design, deployment, or oversight? Is there an agent in the machine or simply a product and its producers?

We're at a moment where AI literacy is still being defined. Many approaches focus on technical skills (how to write prompts), ethical warnings (don't plagiarize, cite AI use), or skepticism (AI is unreliable, always verify). These are all valuable, but they miss something foundational: how these systems are framed and conceptualized will shape everything else.

If students understand generative AI as statistical pattern processors and probabilistic language machines, my point is that they'll tend to calibrate trust appropriately, design better prompts, evaluate outputs critically, and engage in informed policy discussions.

If students understand generative AI as quasi-conscious "partners," they'll tend to over-trust outputs, outsource intellectual work inappropriately, miss system limitations, form parasocial relationships, and struggle with accountability questions.

This project addresses the foundational issue by making language itself the object of study. I'm moving beyond "teaching students not to be fooled" to exploring how students and I together can become more active participants in shaping how we collectively understand and talk about AI.

One component of the Metaphor, Anthropomorphism & Explanation Audit is designed to identify how trust operates through the language of a text. Here's a typical output that is derived from a part of the prompt/schema focused on Metaphor-Driven Trust:

By utilizing metaphors that explicitly invoke awareness, learning, and self-reflection, the text shifts the audience's engagement from performance-based trust (relying on a machine to calculate correctly) to relation-based trust (trusting a conscious entity's judgment and sincerity). When the text claims that the system possesses 'metacognitive access' and 'selective awareness,' it signals to the reader that the AI is not just processing data blindly, but is actively evaluating its own output for truth, bias, and context. Audit Source

A Note on the Politics of Refusal

Is the use of generative AI ethical?

What are the terms of participation here, and who gets to decide them?

This site makes it clear that I'm not exactly entertaining a position of AI refusal. I don't think critical consciousness always has to look like refusal, and not all refusal is ethical just as not all use is uncritical.

My concern is not really whether individuals should or should not refuse AI (I totally respect that), but whether institutions and companies should be able to deploy it without assuming some responsibility for its design, limits, and harms. I’m also aware that I’m situated somewhere in a matrix of diverse stakeholders when it comes to articulating my own purposes in trying to understand what these systems are actually doing based on how they are explained. 10

In this way, I see generative AI failures as good old-fashioned product defects. The word "hallucination" for example is a trap since it borrows from the phenomenology of a mind in distress, casting system outputs as some involuntary cognitive events that deserve my empathy, or at least patience. Yet, a language model has no phenomenology. It has outputs, and some of those outputs are wrong in ways that its designers knew about before shipping it. A term already exists for selling a product whose behavior is known to be unreliable. The term is liability.

Therefore, if I were to refuse to use these tools, my refusal would be rather mundane. It would come through two already well-established forms of consumer reasoning, neither of which would actually require a philosophical position on machine consciousness. So this looks less like refusal and more like some kind of resistance.

The first is product liability. The failures of an LLM belong to the same category as brakes on a vehicle that might not engage reliably, or worse, a steering system that might unpredictably override the driver’s intentions. Yet, I can't make cars safe by choosing not to drive one. Safety would need to come from systems of standards, inspection, recall, and regulation, where the burden falls on designers, manufacturers, and institutions. "Use the brakes responsibly" would easily be recognized as a liability dodge. My individual refusal would, yes, perform my conscientious objection, but without producing or demanding any accountability. Of course, the obvious problem here is that any sane or legislatively coherent gesture toward “regulation” of AI seems to be off the table in our current political environment. I’m also aware that the notion that the tech industry will “regulate itself” has always seemed like a house of cards to me. 11

The second is good old-fashioned misrepresentation. The companies that build large language models have zero problem describing their products using the vocabulary of cognition: understanding, reasoning, learning, knowing. But we already know that the products perform statistical pattern-completion across token sequences. Selling pattern-completion as cognition is like selling a car with the claim that a homunculus lives in the brake master cylinder, making “reasoned” judgments about when to stop. The brakes are hydraulic. The LLM system is math. Both work, sometimes impressively, but the mechanism is not the one that is advertised, and the gap between the advertised mechanism and the actual one shapes every expectation a user brings to the product. That gap has a name in regulatory language, and the name is not "ethics."

Any ethical posture from me, any public declaration that I refuse to use AI, would then operate at the wrong scale and address the wrong actor. The actor is the manufacturer. Regulatory vocabulary already exists for products that behave unpredictably and products that are described in terms their designers know to be inaccurate. That vocabulary points toward institutions and not toward individual consumers who are making principled choices at the checkout counter.

Anthropomorphism as Corporate Shield

But let’s get even closer to the machine level. Generic terms are routinely used to describe corporate software. Rarely do I hear “I’ll execute a Microsoft Outlook protocol”; instead I hear “I’ll send you an email.” Rarely do I hear “I need to query the Google indexing algorithm”; instead I hear “I need to search the web for that.”

But there is a crucial difference in how these “older” tools get talked about versus how generative AI does: I never hear email talked about in such weird, anthropomorphic ways. Email does not have an inner life. Search engines do not have desires.

With AI, though, the discourse has shifted in that direction. But why speak of "AI" as an autonomous entity, rather than what it actually is: a commercial product engineered by a corporation, trained on scraped human labor, and optimized for market dominance?

The autonomous framing of AI performs a specific, highly valuable function for the corporations that build it: the displacement of responsibility. Invoking the brain’s "mystery" at moments of system failure (that’s what the word “hallucination” does) is a rhetorical retreat to shield an engineered system from scrutiny.

The Asymmetry of Agency

If you pay close attention to the press releases, system cards, and tech journalism surrounding these models, you will notice a glaring asymmetry in how this agency is distributed:

- When the product succeeds: The agency is granted to the "Autonomous AI Assistant." The model is creative, insightful, and intelligent. The corporation takes credit for giving birth to a prodigy.

- When the product fails: The agency is diffused. The model didn't contain a software bug; it "hallucinated." The systemic risks aren't the result of reckless deployment; they are "technological inevitabilities."

When a traditional software product generates harmful or biased outcomes, we treat it as a defect of engineering and hold the manufacturer liable. But when an "Agent" generates a harmful outcome, the language of biology and psychology provides a convenient alibi. If the machine is framed as an evolving organism or a developing mind, then its failures can be written off (empathetically) as "growing pains," "unpredictable emergent behaviors," or “hallucinations.”

Obscuring the Human

To speak of AI doing human-like things is to make a ton of actual human decision-makers invisible. However, behind every "decision" an LLM makes is a ledger of human choices: the executives who decided to scrape a specific dataset, the engineers who weighted the parameters, the underpaid gig workers who labeled the toxic content, and the product managers who decided the error rate was acceptable enough to launch.

By treating the machine as an autonomous subject, I simply reify it. I take the massive, distributed human labor required to build these probability engines and disguise it as an independent or “born” intelligence.

The next time a model makes a mistake, try replacing the word "AI" with “This particular corporation's software."

- "The AI hallucinated a legal precedent." -> “OpenAI’s software product called ChatGPT completely fabricated a legal precedent."

- "The AI discriminated against minority applicants." -> “Google’s Gemini software discriminated against minority applicants."

The magic instantly drains away, replaced by the mundane reality of product liability and harm. And that is exactly why the anthropomorphic metaphor is so heavily fraught (and funded). Cruise through the Obscured Mechanics Library for more.

What stands out to me about generative AI is not that it introduces entirely new ethical problems into the mix, but that so much of the discourse works to evade these familiar frameworks by re-casting engineered systems as autonomous, intelligent, or agentic. One thing about institutions like higher ed is that an emphasis on individual refusal can sometimes stand in for deeper conversations about their own blurry systems of structural accountability, particularly when discourse about AI centers things like autonomy and agency rather than design and governance.

The hard truth is that higher education is not immune to extractive systems. Generative AI didn't invent those kinds of problems on its own; it queued up in a long line that includes predatory publishing, leased content, vendor control of infrastructure, contingent labor, surveillance edtech, and more.

This doesn't mean critique is pointless. I just have a different target here. For me, the urgency isn't refusal but building some resistance to the discourse before it becomes the status quo. How generative AI gets talked about, explained, and naturalized: that’s the target. Because another hard truth: in hierarchical academic labor systems, the privilege to refuse remains unevenly distributed. Refusing AI has become a kind of social currency, but like all currencies, it circulates unevenly. The ability to say no to generative tools and to opt for "slow," "human," "organic" work is often the privilege of those whose time is already buffered by stability. For others, especially those further from the secure center of the academic machine, AI might feel less like a shortcut and more like a lifeline or a way to offload unnecessarily heroic and mind-numbing labor.

We are always working within imperfect infrastructures. I can't make my use of AI "pure" any more than I can make my use of an opaque, vendor-supplied discovery layer, or a paywalled scholarly database "pure." But I can continuously ask: What does thoughtful use look like under conditions of structural constraint? What kind of world makes AI feel necessary, and how do I teach within that world without reinforcing its harms?

I'm all for inclusivity, especially the kind that legitimizes forms of participation that may not conform to dominant discourses of refusal. There's a world of difference between someone refusing AI because they've done the work of grappling with its implications and actual capabilities, and someone refusing it because their institutional role protects them from ever having to touch it.

My approach is improvisational. If it seems like I'm figuring this out in public, you'd be right. I'm building my own capacity to make situated, ethical, and strategic choices about generative AI.

This is my corner of refusal, my own tack-in-the-shoe approach: to not let the discourse about generative AI slip past as neutral, inevitable, or inherently progressive. The language being used to talk about AI, right now, is creating one version of its future, and yes, it is alarming to me. Discourse Depot is auditing that language to imagine a different future. Here you’ll find a corpus that focuses on the discourse, over and over and over, but also alternative framings, experiments in deconstruction of texts, and some fun with fallacies. The metaphor, anthropomorphism, and slippery explanations don’t get a pass here. They get an audit. And yes, I’m using generative AI to help with that audit. If there is a micro-manifesto underneath it all, it is that infrastructure is never just technical; it is also ideological. My engagement with AI is engagement with the political, economic, and linguistic arrangements that shape, sustain, and conceal it. I’m not really trying to decenter GenAI in any activist way. I'm just placing it in context. When I teach and talk about AI, I try to do so as part of larger socio-technical infrastructures of authorship, power, and knowledge. AI literacy, then, for me, can't just be about knowing how to use the tool (especially if the tool is a probabilistic language generator marketed as an intelligent mind). It has to be about understanding where the tool comes from, how it's maintained, how it is defined through both description and explanation (and the slippage), and what assumptions are baked into its design and deployment. - Troy

How to cite

This essay

Davis, T. (2026). Looking through the glass box (Version 1.0) [Preprint]. https://doi.org/10.5281/zenodo.20417698

The project

Davis, T. (2025). Discourse Depot (Version 1.0). https://doi.org/10.5281/zenodo.20417262

Discourse Depot is a personal, exploratory project informed by my work as an academic librarian at William & Mary Libraries. It reflects my ongoing effort to understand how generative AI is being framed, used, and questioned in educational contexts and especially in relation to teaching, learning, and research practices. While shaped by professional experience, the interpretations offered here are provisional, experimental, and my own. This project operates independently and is not reviewed or endorsed by William & Mary Libraries.

Contact

Browse freely. Questions, feedback, and "hey, try analyzing this" suggestions welcome.

Troy | Contact me

Discourse Depot © 2026 by TD is licensed under CC BY-NC-SA 4.0