Metaphor, Anthropomorphism & Explanation Audits

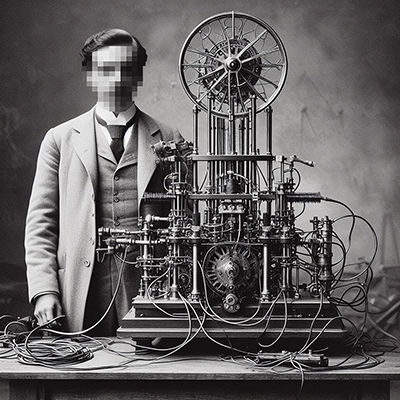

Analyzing how cognitive metaphors—“hallucination,” “learning,” “reasoning”—construct the illusion of mind and obscure the mechanistic reality of AI systems. This is the core work of Discourse Depot: a sustained audit of the language we wrap around machines that predict text.

View Audits 📓

This consciousness architecture relies on a complete conflation of observable output with internal cognitive mechanics. By claiming the AI “understands that others might hold beliefs” or “imagines future scenarios,” the text builds a load-bearing assumption that computational token prediction is identical to subjective phenomenal awareness. If this foundational assumption collapses (if the reader recognizes that the model merely calculates probabilities based on training data without a shred of internal experience), the entire metaphorical system shatters. The biological growth narrative becomes exposed as a mere cover for human-directed hyperparameter tuning and corporate scaling. The sophistication of this framework lies in its emotional resonance; it abandons complex analogical structure in favor of a direct, emotionally manipulative one-to-one mapping that bypasses technical scrutiny.

Resources & Deep Dives

Corpus-level extractions, pedagogical frameworks, and tools for thinking critically about AI discourse.

Corpus Libraries

Thematic extractions across all audits: reframings, source-target mappings, accountability patterns, and critical observations. Queried from the database and consolidated for cross-document exploration.

Browse Libraries →

What Survives?

The deconstruction experiment: strip the metaphors and see what remains. Each text receives a verdict: Preserved, Reduced, or No Phenomenon.

Explore →

Glass Box Syllabus

A course framework treating machine instructions as scholarly inquiry. Schema as argument. Iteration as metacognition. Provenance as scholarship.

View Syllabus →

Educator's FAQ

“Isn’t this just plagiarism?” “Does AI really understand?” A working archive of recurring questions and not-so-recurring answers.

Read FAQ →

Slippage Tools

Interactive learning objects: walk through LLM training and inference step by step and see the gap between what the language implies and what the systems actually do.

Try Tools →

Standalone Artifacts

Self-contained HTML versions of individual analyses, packaged as standalone artifacts. A companion site indexing the corpus one piece at a time. Currently covers the Metaphor & Anthropomorphism Audit framework.

Visit Artifacts →

Experimental Frameworks

Side explorations applying LLM-driven discourse analysis to other domains. These are works-in-progress, not the main event.

Political Framing

Deconstructing how political actors use language to shape policy agendas, define national interests, and manufacture consent.

View Frames 📝

Critical Discourse

A dual-track forensic/activist analysis of power relations, agency, and structural ideology embedded in corporate and media texts.